Octelium as an AI Gateway

Overview

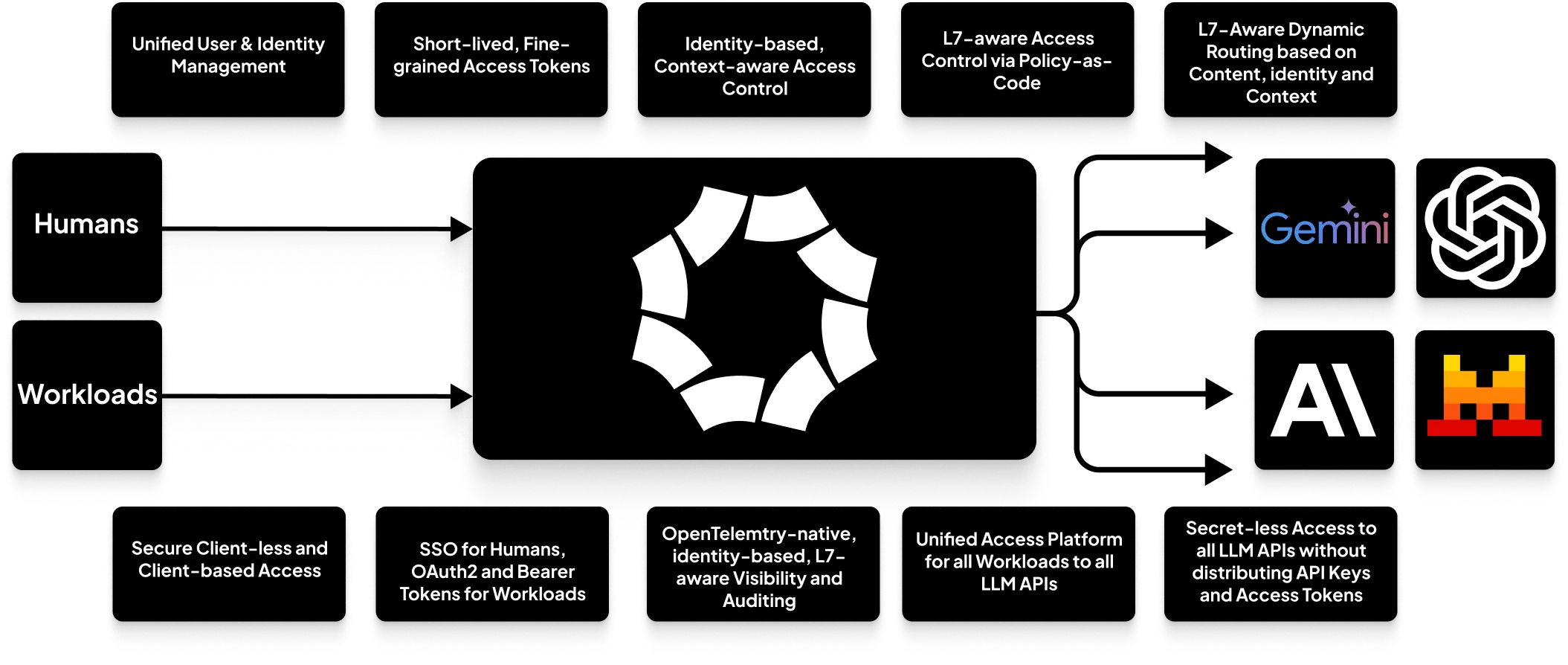

Octelium provides a complete self-hosted, open source infrastructure for building AI gateways to both SaaS and self-hosted LLMs. When used as an AI gateway, Octelium provides the following:

- A unified, scalable infrastructure that lets all your applications, written in any programming language, securely access any LLM API in a secretless way, without having to manage and distribute API keys and access tokens (read more about secretless access here).
- Self-host your models via managed containers (read more here). You can see a dedicated example for Ollama here.
- Centralized, identity-based, application-layer (L7) aware ABAC access control on a per-request basis (read more about access control here).
- Unified, scalable identity management for both `HUMAN` and `WORKLOAD` Users (read more here).
- Request/response sanitization and manipulation with Lua scripts and Envoy ExtProc-compatible interfaces (read more here) to build your own ad-hoc rate limiting, semantic caching, guardrails, and DLP logic.
- OpenTelemetry-native, identity-based, L7 aware visibility and auditing that captures requests and responses, including serialized JSON body content.
- GitOps-friendly, declarative, programmable management (read more here).

A Simple Gateway

Here is a simple example that exposes the Gemini API publicly as a Service (read more about the public clientless BeyondCorp mode here). First, we need to create a Secret for the Gemini API key as follows:

```bash
octeliumctl create secret gemini-api-key
```

Now we create the Service for the Gemini API as follows:

```yaml
kind: Service
metadata:
  name: gemini
spec:
  mode: HTTP
  isPublic: true
  config:
    upstream:
      url: https://generativelanguage.googleapis.com
    http:
      path:
        addPrefix: /v1beta/openai
      auth:
        bearer:
          fromSecret: gemini-api-key
```

You can now create the Service by applying the configuration (read more here):

```bash
octeliumctl apply /PATH/TO/SERVICE.YAML
```

Client Side

Octelium enables authorized Users (read more about access control here) to access the Service both via the client-based mode and publicly via the clientless BeyondCorp mode (read more here). In this guide, we use the clientless mode and access the Service via the standard OAuth2 client credentials flow, so that your workloads, written in any programming language, can access the Service without any special SDKs or external clients. All you need is to create an OAuth2 Credential as illustrated here. Here is an example written in TypeScript:

```typescript
import OpenAI from "openai";
import { OAuth2Client } from "@badgateway/oauth2-client";

async function main() {
  // Exchange the OAuth2 Credential for an access token at
  // https://<DOMAIN>/oauth2/token (OAuth2 client credentials flow).
  const oauth2Client = new OAuth2Client({
    server: "https://<DOMAIN>/",
    clientId: "spxg-cdyx",
    clientSecret: "AQpAzNmdEcPIfWYR2l2zLjMJm....",
    tokenEndpoint: "/oauth2/token",
    authenticationMethod: "client_secret_post",
  });

  const oauth2Creds = await oauth2Client.clientCredentials();

  // The Octelium access token takes the place of the API key; the real
  // Gemini API key is injected by Octelium and never reaches the client.
  const client = new OpenAI({
    apiKey: oauth2Creds.accessToken,
    baseURL: "https://gemini.<DOMAIN>",
  });

  const chatCompletion = await client.chat.completions.create({
    messages: [
      { role: "user", content: "How do I write a Golang HTTP reverse proxy?" },
    ],
    model: "gemini-2.0-flash",
  });

  console.log("Result", chatCompletion);
}

main().catch(console.error);
```

Dynamic Routing

You can also route requests to a specific LLM provider based on the content of the request body (read more about dynamic configuration here). Here is an example:

```yaml
kind: Service
metadata:
  name: total-ai
spec:
  mode: HTTP
  isPublic: true
  config:
    upstream:
      url: https://generativelanguage.googleapis.com
    http:
      enableRequestBuffering: true
      body:
        mode: JSON
      path:
        addPrefix: /v1beta/openai
      auth:
        bearer:
          fromSecret: gemini-api-key
  dynamicConfig:
    configs:
      - name: openai
        upstream:
          url: https://api.openai.com
        http:
          path:
            addPrefix: /v1
          auth:
            bearer:
              fromSecret: openai-api-key
      - name: deepseek
        upstream:
          url: https://api.deepseek.com
        http:
          path:
            addPrefix: /v1
          auth:
            bearer:
              fromSecret: deepseek-api-key
    rules:
      - condition:
          match: ctx.request.http.bodyMap.model == "gpt-4o-mini"
        configName: openai
      - condition:
          match: ctx.request.http.bodyMap.model == "deepseek-chat"
        configName: deepseek
      # Fallback to the default config
      - condition:
          matchAny: true
        configName: default
```

Note that the `openai-api-key` and `deepseek-api-key` Secrets must be created beforehand, the same way as `gemini-api-key`. For more complex and dynamic routing rules (e.g. message-based routing), you can use the full power of Open Policy Agent (OPA) (read more here).
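To make the rule semantics concrete, here is a small TypeScript model of the `total-ai` routing table. It is a sketch, not Octelium code: it assumes the rules are evaluated top to bottom and that the first matching rule selects the config (which is what the trailing `matchAny: true` fallback to `default` suggests). The names `RoutingRule`, `selectConfig` and `resolveUpstream` are hypothetical.

```typescript
// Illustrative model of the total-ai dynamicConfig rules; not Octelium code.
// Assumption: rules are checked in order and the first match wins.

type RequestBody = { model?: string; [key: string]: unknown };

interface RoutingRule {
  // Stands in for the CEL expression in `condition.match`.
  match: (bodyMap: RequestBody) => boolean;
  configName: string;
}

const rules: RoutingRule[] = [
  { match: (b) => b.model === "gpt-4o-mini", configName: "openai" },
  { match: (b) => b.model === "deepseek-chat", configName: "deepseek" },
  // Equivalent of `matchAny: true`: always matches.
  { match: () => true, configName: "default" },
];

// Upstream URL plus `addPrefix` of each config, copied from the YAML above.
const upstreams: Record<string, string> = {
  default: "https://generativelanguage.googleapis.com/v1beta/openai",
  openai: "https://api.openai.com/v1",
  deepseek: "https://api.deepseek.com/v1",
};

function selectConfig(body: RequestBody): string {
  for (const rule of rules) {
    if (rule.match(body)) {
      return rule.configName;
    }
  }
  // Defensive fallback; unreachable while the matchAny rule is last.
  return "default";
}

// Builds the URL the request would be forwarded to.
function resolveUpstream(body: RequestBody, path: string): string {
  return upstreams[selectConfig(body)] + path;
}

console.log(resolveUpstream({ model: "gpt-4o-mini" }, "/chat/completions"));
// https://api.openai.com/v1/chat/completions
```

From the client's point of view nothing changes: the OpenAI SDK client from the previous section, pointed at `https://total-ai.<DOMAIN>`, reaches OpenAI, DeepSeek or Gemini depending only on the `model` it sends, and never holds any of the three upstream API keys.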

Access Control

When it comes to access control, Octelium provides rich, layer-7 aware, identity-based, context-aware ABAC access control on a per-request basis: you can control access based on the HTTP request's path, method, and even its serialized JSON body, using policy-as-code with CEL and Open Policy Agent (OPA) (read more in detail about Policies and access control here). Here is a generic example:

```yaml
kind: Service
metadata:
  name: my-api
spec:
  mode: HTTP
  config:
    upstream:
      url: https://api.example.com
    http:
      enableRequestBuffering: true
      body:
        mode: JSON
  authorization:
    inlinePolicies:
      - spec:
          rules:
            - effect: ALLOW
              condition:
                all:
                  of:
                    - match: ctx.user.spec.groups.hasAll("dev", "ops")
                    - match: ctx.request.http.bodyMap.messages.size() < 4
                    - match: ctx.request.http.bodyMap.model in ["gpt-3.5-turbo", "gpt-4o-mini"]
                    - match: ctx.request.http.bodyMap.temperature < 0.7
```

Here a request is allowed only when the User belongs to both the `dev` and `ops` groups, the conversation has fewer than four messages, the model is one of the two listed, and the temperature is below 0.7. This is just a very simple example of access control for an OpenAI-compatible LLM API; for much more complex access control decisions, you can use Open Policy Agent (OPA).
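The `all.of` block reads as a logical AND: the ALLOW rule applies only when every `match` expression is true. The TypeScript sketch below models that logic outside Octelium to make it easy to reason about. It is an illustration under stated assumptions, not Octelium's policy engine: `hasAll` is assumed to mean "the User belongs to every listed group", a field missing from the request body is treated as a failed condition, and the helper names are hypothetical.

```typescript
// Illustrative model of the my-api inline policy; not Octelium's evaluator.

interface User {
  groups: string[];
}

interface ChatBody {
  model?: string;
  messages?: unknown[];
  temperature?: number;
}

// Assumed meaning of groups.hasAll("dev", "ops"): every listed group is present.
function hasAll(groups: string[], ...required: string[]): boolean {
  return required.every((group) => groups.includes(group));
}

// One predicate per `match` entry under `all.of`. A missing body field is
// treated as a failed condition (assumption).
const conditions: Array<(user: User, body: ChatBody) => boolean> = [
  (user) => hasAll(user.groups, "dev", "ops"),
  (_user, body) => body.messages !== undefined && body.messages.length < 4,
  (_user, body) =>
    body.model !== undefined && ["gpt-3.5-turbo", "gpt-4o-mini"].includes(body.model),
  (_user, body) => body.temperature !== undefined && body.temperature < 0.7,
];

// `all.of` is a logical AND. When the ALLOW rule does not match, the request
// is assumed to be denied, since no other rule allows it.
function isAllowed(user: User, body: ChatBody): boolean {
  return conditions.every((condition) => condition(user, body));
}

const devOps: User = { groups: ["dev", "ops", "qa"] };
console.log(isAllowed(devOps, { model: "gpt-4o-mini", messages: [{}], temperature: 0.2 })); // true
console.log(isAllowed(devOps, { model: "gpt-4o", messages: [{}], temperature: 0.2 })); // false
```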

Request/Response Manipulation

You can also use Octelium's plugins, currently Lua scripts and Envoy's ExtProc, to sanitize and manipulate your requests and responses (read more about HTTP plugins here). Here is an example:

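```yaml
kind: Service
metadata:
  name: safe-gemini
spec:
  mode: HTTP
  isPublic: true
  config:
    upstream:
      url: https://generativelanguage.googleapis.com
    http:
      enableRequestBuffering: true
      body:
        mode: JSON
      path:
        addPrefix: /v1beta/openai
      plugins:
        - name: main
          condition:
            match: ctx.request.http.path == "/chat/completions" && ctx.request.http.method == "POST"
          lua:
            inline: |
              function onRequest(ctx)
                local body = json.decode(octelium.req.getRequestBody())

                -- Cap the temperature (the field is optional, so check for nil first)
                if body.temperature ~= nil and body.temperature > 0.7 then
                  body.temperature = 0.7
                end

                -- Downgrade requests for the Pro model to the Flash model
                if body.model == "gemini-2.5-pro" then
                  body.model = "gemini-2.5-flash"
                end

                -- Reject empty or overly long conversations
                if body.messages == nil or #body.messages == 0 or #body.messages > 4 then
                  octelium.req.exit(400)
                  return
                end

                -- Enforce the system prompt. Lua arrays are 1-indexed, so the first
                -- message is body.messages[1] (assumes clients send the system message first)
                body.messages[1].role = "system"
                body.messages[1].content = "You are a helpful assistant that provides concise answers"

                -- Reject any message longer than 500 characters
                for _, message in ipairs(body.messages) do
                  if strings.lenUnicode(message.content) > 500 then
                    octelium.req.exit(400)
                    return
                  end
                end

                -- Ask an external guardrail API to vet higher-temperature requests
                if body.temperature ~= nil and body.temperature > 0.4 then
                  local c = http.client()
                  c:setBaseURL("http://guardrail-api.default.svc")
                  local req = c:request()
                  req:setBody(json.encode(body))
                  local resp, err = req:post("/v1/check")
                  if err then
                    octelium.req.exit(500)
                    return
                  end

                  if resp:code() == 200 then
                    local apiResp = json.decode(resp:body())
                    if not apiResp.isAllowed then
                      octelium.req.exit(400)
                      return
                    end
                  end
                end

                -- Answer from a semantic cache when the first message after the
                -- system prompt mentions "paris"
                local userMessage = body.messages[2]
                if userMessage ~= nil and strings.contains(strings.toLower(userMessage.content), "paris") then
                  local c = http.client()
                  c:setBaseURL("http://semantic-caching.default.svc")
                  local req = c:request()
                  req:setBody(json.encode(body))
                  local resp, err = req:post("/v1/get")
                  if err then
                    octelium.req.exit(500)
                    return
                  end

                  if resp:code() == 200 then
                    local apiResp = json.decode(resp:body())
                    octelium.req.setResponseBody(json.encode(apiResp.response))
                    octelium.req.exit(200)
                    return
                  end
                end

                -- Forward the sanitized body upstream
                octelium.req.setRequestBody(json.encode(body))
              end
```

In this example, the plugin runs on `POST /chat/completions` requests. It caps `temperature` at 0.7, swaps `gemini-2.5-pro` for `gemini-2.5-flash`, rejects conversations with no messages or more than four, rejects any message longer than 500 characters, enforces a system prompt, consults an external guardrail API for requests with a temperature above 0.4, and answers directly from a semantic caching service when the user's prompt mentions "paris".

Observability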

Octelium also provides OpenTelemetry-ready, application-layer (L7) aware visibility and access logging in real time (see an example for HTTP here), including the capture of serialized JSON request/response body content (read more here). You can read more about visibility here.

Here are a few more features that you might be interested in:

- Routing not just by request paths, but also by header keys and values, and by request body content including JSON (read more here).
- Request/response header manipulation (read more here).
- Cross-Origin Resource Sharing (CORS) (read more here).
- Secretless access to upstreams and injecting bearer, basic, or custom authentication header credentials (read more here).
- Application layer-aware ABAC access control via policy-as-code using CEL and Open Policy Agent (read more here).
- OpenTelemetry-ready, application-layer L7 aware auditing and visibility (read more here).